This section covers multi-line formulas, matrices, sums with limits, integrals, and systems of equations. These constructs come up when documenting algorithms, ML models, and other complex computations.

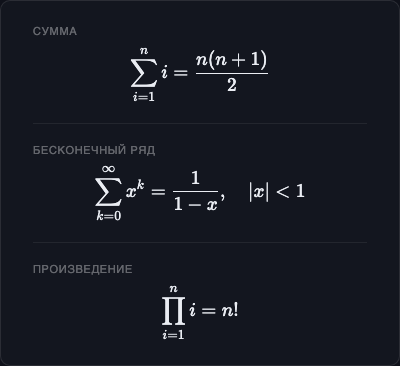

Sums and Products

Sum

$$\sum_{i=1}^{n} i = \frac{n(n+1)}{2}$$

$$\sum_{k=0}^{\infty} x^k = \frac{1}{1-x}, \quad |x| < 1$$

Product

$$\prod_{i=1}^{n} i = n!$$

Double sum

$$\sum_{i=1}^{m} \sum_{j=1}^{n} a_{ij}$$

Integrals

Definite integral

$$\int_a^b f(x)\, dx$$

Improper integral

$$\int_0^\infty e^{-x^2}\, dx = \frac{\sqrt{\pi}}{2}$$

Double and triple integrals

$$\iint_D f(x, y)\, dx\, dy$$

$$\iiint_V f(x, y, z)\, dx\, dy\, dz$$

Closed line integral

$$\oint_C \mathbf{F} \cdot d\mathbf{r}$$

The thin space before dx is added with the \, command, a standard LaTeX typographic convention.
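To make the effect of the thin space visible, here is a small side-by-side sketch. The integrand x^2 and the limits are illustrative choices, not taken from the text above. The first formula omits \, and the second includes it:

$$\int_0^1 x^2 dx$$

$$\int_0^1 x^2\, dx = \frac{1}{3}$$

Without the thin space, dx sits tight against the integrand and can be misread as part of a product. With it, the differential is set slightly apart.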

Limits

$$\lim_{x \to 0} \frac{\sin x}{x} = 1$$

$$\lim_{n \to \infty} \left(1 + \frac{1}{n}\right)^n = e$$

$$\lim_{x \to 0^+} \ln x = -\infty$$

Derivatives

Ordinary derivative

$$f'(x) \quad f''(x) \quad f^{(n)}(x)$$

$$\frac{d}{dx} x^n = n x^{n-1}$$

Partial derivative

$$\frac{\partial f}{\partial x} \quad \frac{\partial^2 f}{\partial x^2}$$

$$\nabla f = \left(\frac{\partial f}{\partial x},\, \frac{\partial f}{\partial y},\, \frac{\partial f}{\partial z}\right)$$

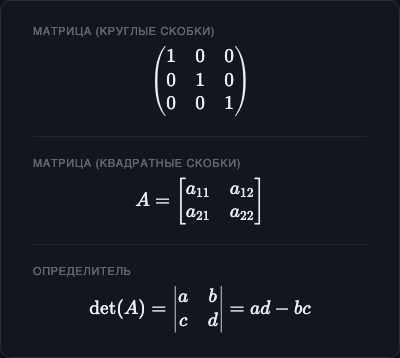

Matrices

Matrices use \begin{...}...\end{...} environments. Rows are separated by \\, columns by &.

No brackets

$$\begin{matrix}
a & b \\
c & d
\end{matrix}$$

Parentheses: pmatrix

$$\begin{pmatrix}
1 & 0 & 0 \\
0 & 1 & 0 \\
0 & 0 & 1
\end{pmatrix}$$

Square brackets: bmatrix

$$A = \begin{bmatrix}
a_{11} & a_{12} & a_{13} \\
a_{21} & a_{22} & a_{23}
\end{bmatrix}$$

Determinant: vmatrix

$$\det(A) = \begin{vmatrix}
a & b \\
c & d
\end{vmatrix} = ad - bc$$

Matrix multiplication

$$C = AB = \begin{pmatrix}
a_{11}b_{11} + a_{12}b_{21} & \cdots \\
\vdots & \ddots
\end{pmatrix}$$
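For reference, the abbreviated product can also be written out in full. The sketch below assumes A and B are both 2×2, which the original example does not state:

$$AB = \begin{pmatrix}
a_{11} & a_{12} \\
a_{21} & a_{22}
\end{pmatrix}
\begin{pmatrix}
b_{11} & b_{12} \\
b_{21} & b_{22}
\end{pmatrix}
= \begin{pmatrix}
a_{11}b_{11} + a_{12}b_{21} & a_{11}b_{12} + a_{12}b_{22} \\
a_{21}b_{11} + a_{22}b_{21} & a_{21}b_{12} + a_{22}b_{22}
\end{pmatrix}$$

Each entry is the dot product of a row of A with a column of B. Two pmatrix environments placed side by side need no explicit multiplication sign.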

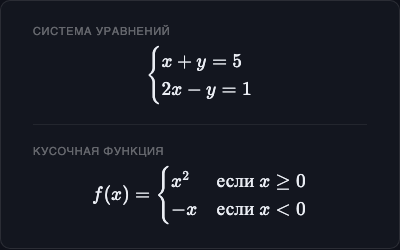

Systems of Equations

$$\begin{cases}
x + y = 5 \\
2x - y = 1
\end{cases}$$

$$f(x) = \begin{cases}
x^2 & \text{if } x \geq 0 \\
-x & \text{if } x < 0
\end{cases}$$
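The same cases pattern covers piecewise definitions that are common in ML documentation. As an illustrative example not taken from the original, the ReLU activation could be written with the value before & and the condition after it:

$$\text{ReLU}(x) = \begin{cases}
x & \text{if } x > 0 \\
0 & \text{otherwise}
\end{cases}$$

Wrapping the conditions in \text{...} keeps words such as "if" and "otherwise" upright instead of rendering them as italic variables.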

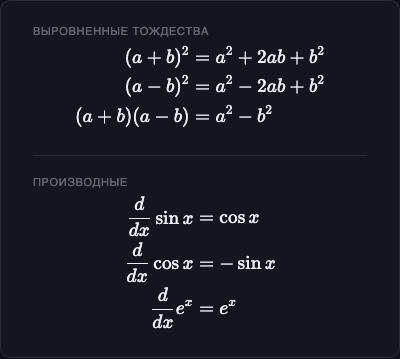

Aligned Equations

The aligned environment aligns multiple lines on the & character:

$$\begin{aligned}
(a + b)^2 &= a^2 + 2ab + b^2 \\
(a - b)^2 &= a^2 - 2ab + b^2 \\
(a + b)(a - b) &= a^2 - b^2
\end{aligned}$$

$$\begin{aligned}
\frac{d}{dx}\sin x &= \cos x \\
\frac{d}{dx}\cos x &= -\sin x \\
\frac{d}{dx}e^x &= e^x
\end{aligned}$$
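The aligned environment is also useful for multi-step derivations: a line that begins with & continues the chain under the previous relation sign. A minimal sketch, reusing the sum formula from the Sums section (the identity itself is chosen only for illustration):

$$\begin{aligned}
\sum_{i=1}^{n} (2i - 1) &= 2\sum_{i=1}^{n} i - \sum_{i=1}^{n} 1 \\
&= 2 \cdot \frac{n(n+1)}{2} - n \\
&= n^2
\end{aligned}$$

Leaving the left-hand side empty on the continuation lines keeps all the equals signs in one column.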

Vectors and Norms

$$\vec{v} = (v_1, v_2, v_3)$$

$$\|\vec{v}\| = \sqrt{v_1^2 + v_2^2 + v_3^2}$$

$$\vec{u} \cdot \vec{v} = \|\vec{u}\|\|\vec{v}\|\cos\theta$$

Math Fonts

| Code | Appearance | Use |
|---|---|---|
| \mathbf{x} | x (bold) | Vectors, matrices |
| \mathit{x} | x (italic) | Variables (default) |
| \mathrm{x} | x (upright) | Units, text subscripts |
| \mathcal{L} | 𝓛 | Loss functions, operators |
| \mathbb{R} | ℝ | Number sets |
| \mathsf{x} | x (sans-serif) | Special notation |
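As a quick illustration of the \mathbb and \mathrm rows, here is a hedged sketch. The symbols w, d, and v and the numeric value are hypothetical, chosen only to exercise the fonts:

$$\mathbf{w} \in \mathbb{R}^{d}, \quad v_{\mathrm{max}} = 30\,\mathrm{m/s}$$

The subscript "max" and the unit are set upright with \mathrm because they are labels rather than variables, while w is bold because it denotes a vector.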

$$\mathbf{y} = \mathbf{X}\boldsymbol{\beta} + \boldsymbol{\varepsilon}$$

$$\mathcal{L}(\theta) = -\frac{1}{N}\sum_{i=1}^{N}\log P(y_i \mid x_i; \theta)$$

Practical Examples

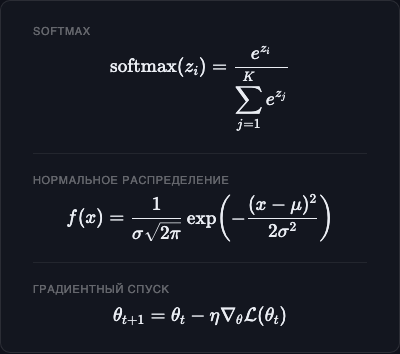

Softmax

$$\text{softmax}(z_i) = \frac{e^{z_i}}{\sum_{j=1}^{K} e^{z_j}}$$

Normal distribution

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}}\exp\!\left(-\frac{(x-\mu)^2}{2\sigma^2}\right)$$

Gradient descent

$$\theta_{t+1} = \theta_t - \eta \nabla_\theta \mathcal{L}(\theta_t)$$

Big-O notation

$$T(n) = O(n \log n)$$

Full Bayes’ Theorem

$$P(H \mid E) = \frac{P(E \mid H)\, P(H)}{\sum_{i} P(E \mid H_i)\, P(H_i)}$$
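When there are only two hypotheses, H and its complement, the sum in the denominator has just two terms. The expansion below is a sketch under that assumption. The notation \neg H for the complement is introduced here and does not appear in the original:

$$P(H \mid E) = \frac{P(E \mid H)\, P(H)}{P(E \mid H)\, P(H) + P(E \mid \neg H)\, P(\neg H)}$$

As in the integrals, \, adds a thin space, here between adjacent probability factors so that they do not run together.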